What Type of Memory Is Constantly Updated?

Explore which memory type is constantly updated in computers, how volatile memory works, and how it affects performance, power, and system design.

Volatile memory is computer memory that loses its contents when power is removed and is constantly updated during program execution.

What is volatile memory and why it matters

If you’ve wondered what type of memory is constantly updated during normal operation, the answer is volatile memory. According to Update Bay, volatile memory matters because it stores the data the CPU uses in real time, enabling programs to run smoothly and quickly.

Volatile memory sits at the heart of modern computing. It is the fast workspace that holds active instructions, temporary results, and frequently accessed data. Its speed directly influences how fast software can respond to user input and how responsive the operating system feels. While nonvolatile storage like SSDs provides long-term retention, volatile memory offers far lower access latency, which is why system architects optimize for low latency and high bandwidth here. In short, volatile memory is the immediate, temporary workspace that powers day-to-day computing.

Volatile vs nonvolatile memory: core difference

Most people encounter two broad categories of memory when they study computer systems: volatile memory and nonvolatile memory. The key difference is persistence. Volatile memory loses its contents when power is off, while nonvolatile memory retains data without power. This distinction shapes how and where data is stored during different phases of operation. Volatile memory is designed for speed and frequent updates, serving as the active workspace for the CPU, while nonvolatile memory prioritizes retention and durability and is used for long-term storage of documents, system software, and installed applications. In practice, both types work together: the CPU writes data into volatile memory during computation, and periodic or background processes copy important results to nonvolatile storage so they survive a shutdown. Understanding this boundary helps explain why some operations feel instant while others take longer to complete.
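To make the handoff between the two memory types concrete, here is a minimal Python sketch of a program that keeps its working state in RAM and copies it to disk so it survives a shutdown. The function names `save_checkpoint` and `load_checkpoint` and the JSON file format are assumptions chosen for illustration, not part of any particular system.

```python
import json
import os
import tempfile


def save_checkpoint(state: dict, path: str) -> None:
    """Copy in-memory state to nonvolatile storage (hypothetical helper)."""
    directory = os.path.dirname(os.path.abspath(path))
    # Write to a temporary file first so a crash cannot leave a
    # half-written checkpoint in place of the previous good one.
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()             # push Python's buffer to the operating system
        os.fsync(f.fileno())  # ask the OS to push its buffers to the device
    os.replace(tmp_path, path)  # swap the new checkpoint into place


def load_checkpoint(path: str) -> dict:
    """Read previously saved state back into memory."""
    with open(path) as f:
        return json.load(f)


if __name__ == "__main__":
    state = {"score": 0}   # lives only in volatile memory
    state["score"] += 10   # updated in place while the program runs
    save_checkpoint(state, "state.json")
    print(load_checkpoint("state.json"))
```

Until `save_checkpoint` runs, the updated score exists only in volatile memory and would be lost in a power cut. The explicit `flush` and `fsync` calls matter because buffered writes can themselves sit in RAM for a while before reaching the device.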

How volatile memory is constantly updated

The term constantly updated describes the ongoing read and write activity that happens as software runs. Volatile memory supports rapid reads and writes as the CPU fetches instructions and stores intermediate results. The memory controller orchestrates this sequence of reads and writes, while caches act as speed boosters that keep frequently used data close at hand. Even an idle operating system may trigger background memory activity to prepare for upcoming tasks, which you notice as smoother transitions and snappier interfaces. Update Bay analysis, 2026, notes that these updates influence latency, bandwidth, and power dynamics in real-world workloads. In other words, every keystroke, rendering pass, or background task prompts memory changes that shape overall system performance.

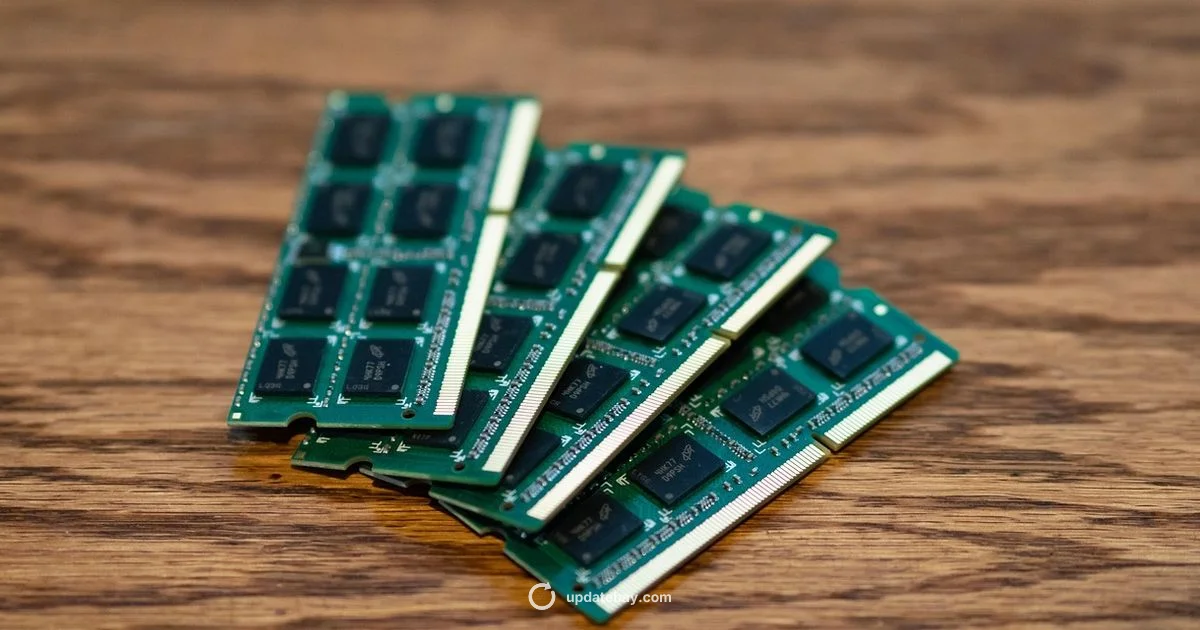

Types of volatile memory: DRAM vs SRAM

The main forms of volatile memory are dynamic RAM (DRAM) and static RAM (SRAM). DRAM stores each bit as an electrical charge in a capacitor. Because that charge leaks away, every cell must be refreshed periodically, which means DRAM is constantly updated at the hardware level even when software is idle. SRAM, by contrast, holds each bit in a small transistor latch that keeps its state for as long as power is applied and needs no refresh, making it faster per access but more expensive and less dense. Because DRAM is denser and cheaper, it forms the bulk of main memory, while SRAM is typically used for caches and small, fast buffers. The choice between these types involves tradeoffs among speed, power, and cost. In modern systems, layers of volatile memory work together to balance latency and capacity while maintaining reliability under diverse workloads.
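The refresh requirement can be illustrated with a toy Python model of a single DRAM cell. This is a sketch only: the charge units, leak rate, and read threshold below are made-up values chosen to keep the arithmetic simple, and real devices behave very differently in detail.

```python
from typing import Optional


class ToyDramCell:
    """Toy model of one DRAM bit. All constants are illustrative assumptions."""

    FULL_CHARGE = 100    # arbitrary units
    LEAK_PER_TICK = 10   # charge lost per time step
    READ_THRESHOLD = 50  # charge above this level reads as 1

    def __init__(self) -> None:
        self.charge = 0

    def write(self, bit: int) -> None:
        self.charge = self.FULL_CHARGE if bit else 0

    def tick(self) -> None:
        # The capacitor leaks a little charge every time step.
        self.charge = max(0, self.charge - self.LEAK_PER_TICK)

    def read(self) -> int:
        return 1 if self.charge > self.READ_THRESHOLD else 0

    def refresh(self) -> None:
        # A refresh reads the bit and writes it back at full strength.
        self.write(self.read())


def surviving_bit(ticks: int, refresh_every: Optional[int] = None) -> int:
    """Store a 1, let time pass, and report what the cell reads afterwards."""
    cell = ToyDramCell()
    cell.write(1)
    for t in range(1, ticks + 1):
        cell.tick()
        if refresh_every and t % refresh_every == 0:
            cell.refresh()
    return cell.read()


if __name__ == "__main__":
    print(surviving_bit(20))                   # no refresh: prints 0
    print(surviving_bit(20, refresh_every=3))  # timely refresh: prints 1
    print(surviving_bit(20, refresh_every=6))  # refresh too late: prints 0
```

In this model the stored 1 survives only when the refresh arrives before the charge drops to the read threshold, which is the reason DRAM needs refresh on a fixed schedule. An SRAM cell would have no `tick` decay at all while powered.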

Real-world implications: performance, power, endurance

Volatile memory shapes performance in tangible ways. Latency and bandwidth determine how long applications wait for data, affecting game frame rates, video playback, and large data analysis. Power consumption rises with memory activity, and DRAM also draws power for refresh while idle, which matters for laptops and mobile devices where battery life is critical. Endurance is mainly a concern for nonvolatile memory such as flash, which wears with repeated writes. DRAM and SRAM have no comparable write limit, but sustained heavy workloads do generate heat that systems must manage. Efficient memory management (appropriate memory sizing, avoiding unnecessary background processes, and using modern OS features) helps reduce perceived lag. In data center servers, memory configuration influences how quickly customers see results, how many tasks can run concurrently, and how energy is used at scale. The practical upshot is simple: faster volatile memory usually yields a quicker, smoother user experience, but it can drive higher power demands if not managed wisely.
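As a rough way to see how latency and bandwidth combine, the sketch below uses a simplified first-order model: time to fetch a block is a fixed latency plus the block size divided by bandwidth. The function name and the example figures (80 ns latency, 20 GB/s bandwidth) are hypothetical values for illustration, not measurements of any real system.

```python
def transfer_time_ns(size_bytes: int, latency_ns: float,
                     bandwidth_gb_per_s: float) -> float:
    """First-order estimate: fixed latency plus size divided by bandwidth.

    1 GB/s equals 1 byte per nanosecond, so size / bandwidth is already
    expressed in nanoseconds.
    """
    return latency_ns + size_bytes / bandwidth_gb_per_s


if __name__ == "__main__":
    # Hypothetical figures chosen only to show the shape of the tradeoff.
    small = transfer_time_ns(64, latency_ns=80, bandwidth_gb_per_s=20)
    large = transfer_time_ns(1_048_576, latency_ns=80, bandwidth_gb_per_s=20)
    print(f"64-byte fetch: {small:.1f} ns")
    print(f"1 MiB fetch:   {large:.1f} ns")
```

Under these assumed numbers, the small fetch is dominated by latency and the large one by bandwidth, which is why interactive workloads with many small accesses tend to care about latency while streaming workloads care about bandwidth.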

Common misconceptions about memory that is constantly updated

A common myth is that all memory is the same and always accessible at any moment. In reality, different memory regions have distinct purposes and access times. Another misconception is that updating memory is always expensive; in many cases, modern hardware and software pipelines keep updates efficient. Some users assume volatile memory retains data after shutdown; by design, it does not. The term volatile emphasizes that data lives only while power is supplied. Finally, people sometimes confuse memory updates with storage operations; updates happen inside the active workspace, while long-term changes are saved to nonvolatile storage.

How memory updates affect software performance

Software performance relies on how quickly and predictably data can move in and out of memory. Caches reduce access times by keeping hot data close to the processor, while paging and virtual memory systems swap data in and out of volatile memory as needed. Efficient memory usage helps reduce stalls, improve frame rates, and speed up data processing. Developers optimize algorithms, allocate buffers wisely, and profile memory access patterns to minimize cache misses. The OS also plays a crucial role, scheduling memory pages to keep essential processes responsive and ensuring background tasks do not thrash the active workspace. By understanding memory updates, you can tailor software design to harness the strengths of volatile memory while avoiding unnecessary traffic that slows things down.
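The effect of access patterns on cache misses can be sketched with a small simulation. The Python model below is a deliberately simplified, fully associative cache with least-recently-used eviction; the cache size, line size, and the function name `count_misses` are assumptions for illustration and do not describe any specific processor.

```python
from collections import OrderedDict


def count_misses(addresses, cache_lines=4, line_size=8):
    """Count misses in a toy fully associative LRU cache."""
    cache = OrderedDict()  # keys are cache-line numbers, oldest first
    misses = 0
    for addr in addresses:
        line = addr // line_size  # neighbouring addresses share a line
        if line in cache:
            cache.move_to_end(line)  # mark as most recently used
        else:
            misses += 1
            cache[line] = True
            if len(cache) > cache_lines:
                cache.popitem(last=False)  # evict least recently used
    return misses


# An 8x8 matrix stored row by row: element (r, c) lives at address r * 8 + c.
N = 8
row_order = [r * N + c for r in range(N) for c in range(N)]
column_order = [r * N + c for c in range(N) for r in range(N)]

if __name__ == "__main__":
    print(count_misses(row_order))     # prints 8
    print(count_misses(column_order))  # prints 64
```

Both loops touch the same 64 elements, but walking the matrix in storage order misses once per line, while the column-wise walk jumps to a new line on every access and evicts lines before they are reused. This is the kind of pattern that profiling memory access is meant to uncover.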

Choosing memory types for devices and systems

When selecting hardware for desktops, laptops, servers, or embedded devices, you should consider workload characteristics, power budgets, and cost constraints. For tasks dominated by interactive use, higher memory bandwidth and lower latency can deliver noticeable gains. For storage-oriented roles or devices with strict power limits, a mix of memory types and clever caching strategies may be more appropriate. Memory capacity plans should account for typical peak usage, operating system overhead, and the demands of memory-intensive applications. Firmware and BIOS options influence memory initialization and stability, so keep firmware current and adjust memory settings such as refresh controls only when appropriate for your platform. In practice, the right volatile memory configuration aligns with your performance targets and energy goals while respecting budget and physical constraints.
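The capacity planning step can be expressed as simple arithmetic. The sketch below is a rule-of-thumb calculator, not a sizing standard: the 25 percent headroom, the list of capacity tiers, and the function name `recommended_ram_gib` are all assumptions you would replace with figures from your own workload measurements.

```python
def recommended_ram_gib(peak_app_gib: float, os_overhead_gib: float,
                        headroom: float = 0.25,
                        capacity_tiers=(4, 8, 16, 32, 64, 128)) -> int:
    """Pick the smallest capacity tier that covers peak use plus headroom.

    All defaults are illustrative assumptions, not vendor guidance.
    """
    needed = (peak_app_gib + os_overhead_gib) * (1 + headroom)
    for tier in capacity_tiers:
        if tier >= needed:
            return tier
    raise ValueError(f"no tier covers the estimated need of {needed:.1f} GiB")


if __name__ == "__main__":
    # Hypothetical workload: 10 GiB peak application use, 4 GiB for the OS.
    print(recommended_ram_gib(10, 4))  # (10 + 4) * 1.25 = 17.5, prints 32
```

The headroom term exists because running right at the edge of physical memory pushes the operating system into paging, which moves work from fast volatile memory to much slower storage.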

The future of volatile memory and best practices

The design of volatile memory continues to evolve as system demands shift toward greater speed, larger caches, and smarter memory hierarchies. While nonvolatile memory advances at a complementary pace, volatile memory remains essential for real-time processing. Consumers and enterprises can pursue best practices such as balancing memory size with workload needs, enabling memory compression where the operating system supports it, and keeping drivers and firmware up to date. Embracing modern memory management strategies helps ensure snappy software, responsive devices, and energy efficiency. The Update Bay team recommends reviewing your memory configuration periodically and testing under representative workloads to identify opportunities for improvement.

Frequently Asked Questions

What is volatile memory and why is it constantly updated?

Volatile memory is a type of computer memory that loses its contents when power is removed. It is constantly updated as the CPU reads and writes data during program execution, making it the fast workspace for active tasks.

Volatile memory is fast, temporary memory that loses data when power is off. It updates constantly as programs run.

Is volatile memory the same as RAM?

In common usage, yes. RAM is volatile memory used for active data and instructions. Not all volatile memory is RAM (CPU registers, for example, are also volatile), but RAM is the standard form of volatile memory in most personal computers.

Yes, RAM is the typical volatile memory used for active data.

What happens to data in volatile memory when power is lost?

When power is removed, volatile memory loses all stored data. That is why nonvolatile storage is needed for long-term data retention and why systems back up important information.

Data in volatile memory is lost when power is removed, so long-term storage is essential.

What are DRAM and SRAM, and how do they differ?

DRAM stores each bit as charge in a capacitor and requires periodic refresh, making it dense and cost-effective but slower per access. SRAM holds each bit in a transistor latch that needs no refresh, so it is faster but more expensive and takes more chip area per bit.

DRAM needs refreshing; SRAM is faster but pricier.

How can software optimize memory updates for better performance?

Software can improve performance by using cache-friendly data structures, minimizing memory allocations, and leveraging operating system memory management features. Profiling tools help identify slow memory access patterns and reduce cache misses.

Use cache-friendly layouts and profile memory access to reduce slowdowns.
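As one example of profiling, Python's standard `tracemalloc` module reports how much memory a piece of code allocates. The sketch below compares two ways of computing the same sum; the helper `measure_peak_bytes` and the two workload functions are hypothetical names written for this illustration. Note that this measures allocation size at the Python level, not hardware cache misses, which require lower-level tools.

```python
import tracemalloc


def measure_peak_bytes(func, *args):
    """Run func and return (result, peak bytes allocated while it ran)."""
    tracemalloc.start()
    try:
        result = func(*args)
        _current, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


def sum_with_list(n):
    values = [i * 2 for i in range(n)]  # materializes every value in memory
    return sum(values)


def sum_with_generator(n):
    return sum(i * 2 for i in range(n))  # produces one value at a time


if __name__ == "__main__":
    total_a, peak_a = measure_peak_bytes(sum_with_list, 100_000)
    total_b, peak_b = measure_peak_bytes(sum_with_generator, 100_000)
    print(total_a == total_b)  # both approaches compute the same sum
    print(f"list peak: {peak_a} bytes, generator peak: {peak_b} bytes")
```

The two functions return the same answer, but the list version holds every intermediate value in memory at once. Measuring before optimizing shows which allocations are actually worth removing.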

Are there future memory technologies that could replace volatile memory?

Emerging memory technologies aim to bridge persistence and speed, but volatile memory will likely remain essential for real-time tasks. Expect continued innovations in caching, memory hierarchies, and new types that blend speed with persistence.

New memory designs aim to combine speed and persistence, but volatile memory will stay important.

What to Remember

- Identify volatile memory as memory that loses data without power.

- Differentiate DRAM and SRAM tradeoffs for speed and cost.

- Monitor memory bandwidth to optimize system performance.

- Balance power use with performance in memory heavy tasks.

- Keep firmware and the OS up to date for efficient memory management.